Self hosted

Open infrastructure.

Full control, made free.

Deploy MAX and Mojo on your infrastructure - one open-source container under 700MB that runs on NVIDIA, AMD, Apple & more. Write custom serving layers, models, kernels, profile workloads, and optimize every layer. Join our open source AI community today.

Why self-host with MAX+Mojo?

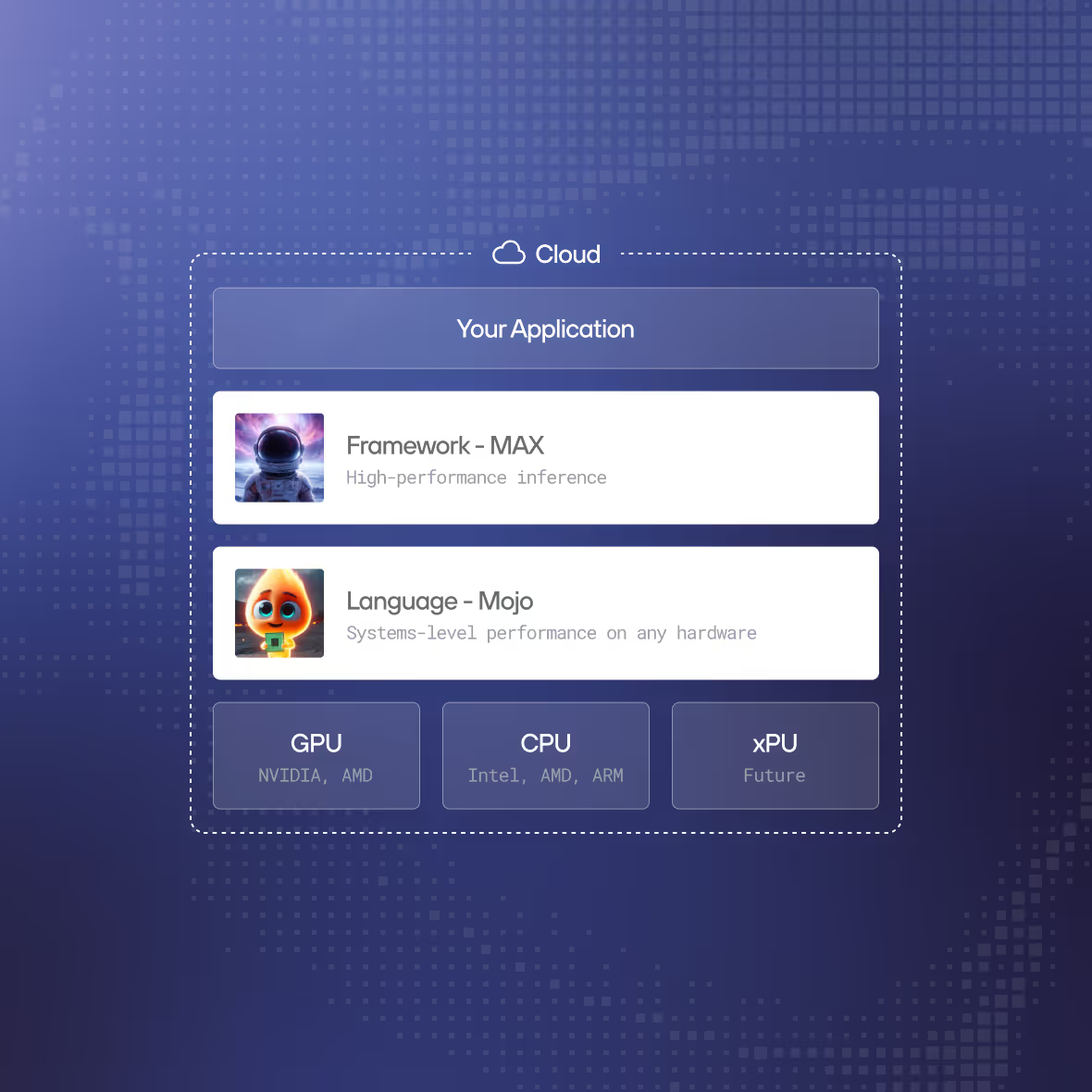

A vertically integrated AI software stack

MAX controls the full inference path - from model graph through MLIR compilation to kernel execution on hardware. No stitching together vLLM, TensorRT, and CUDA. One stack, one container, one team to work with. Every layer is optimized together, not bolted on.

FROM MODEL GRAPH TO GPU KERNEL, ONE UNIFIED STACK

Run on compute you own

NVIDIA, AMD, Apple Silicon GPUs along with Intel, ARM, AMD CPUs - MAX compiles and runs on all of them from the same container image. Use your existing reserved instances, cloud credits, and committed use discounts. AWS, GCP, Azure, OCI, on-prem. Your hardware, your cloud account.

ANY GPU. ANY CLOUD. YOUR CLOUD CREDITS.

Write your own custom models

MAX's PyTorch-like model APIs let you define and deploy proprietary architectures - novel attention mechanisms, state-space models, hybrid designs. Compile them for any supported hardware without maintaining separate codebases. Your model is your moat. Keep it yours.

YOUR ARCHITECTURE. ANY HARDWARE. NO VENDOR DEPENDENCY.

Deploy custom kernels in Mojo

Need a novel attention pattern? Custom quantization scheme? Write it in Mojo with Python-compatible syntax and get better-than-CUDA performance across NVIDIA, AMD, and Apple Silicon. 20-30 lines of Mojo replace hundreds of lines of CUDA - and they run on every accelerator.

20-30 LINES OF MOJO VS HUNDREDS OF LINES OF CUDA

Production-grade serving built in

Continuous batching, KV-cache optimization, speculative decoding, auto-hardware detection, and OpenAI-compatible API endpoints - all compiled into a single container under 700MB. No assembly required. pip install modular, then max serve.

PIP INSTALL MODULAR. MAX SERVE. YOU'RE LIVE.

Join the open-source AI revolution

MAX is open source. Mojo is open-source. Inspect the code, contribute to the project, build on top of it. No black-box runtime, no proprietary lock-in, no trust-us performance claims. You can read every kernel, every optimization pass, every line of the serving infrastructure.

OPEN SOURCE. OPEN MODELS. OPEN KERNELS. NO BLACK BOXES.

Who self-hosts with our open infrastructure?

If you’ve invested in NVIDIA and AMD hardware, Modular is the only inference platform that runs on both from one container. Maximize utilization across your entire fleet.

Defense, intelligence, and regulated financial services need inference that runs without any external connectivity. Self-hosted MAX is a single container with zero call-home dependencies.

NVIDIA, AMD, Custom Silicon clusters for inference. No other self-hosted platform runs GPU-accelerated inference on diverse hardware pools.

If your moat is a proprietary model architecture, you need self-hosted inference with kernel-level programmability. Mojo lets you write custom ops that compile for any hardware target.

MAX+Mojo vs. alternatives

- MAX+Mojo

- Hardware Portability

One container runs on NVIDIA, AMD, and Apple Silicon. Same model binary, any GPU, no separate builds.

- Write Models + Customize Serving

Write custom models, adjust layers in serving, author GPU ops in Mojo. MAX is PyTorch+vLLM combined in one.

- Open Source Community

MAX+Mojo is open source. Inspect every kernel, every optimization pass, contribute back, or fork for your own needs.

- AI Coding Skills

Incorporate into your existing AI workflows with OpenCode, Codex, Claude, Cursor and more with our AI Skills.

- Deploy Anywhere

Single container. Zero external dependencies, no control plane, no telemetry.

- Alternatives

- Runtime Wrappers

vLLM and SGLang optimize on top of PyTorch and CUDA. They can't touch what's inside the kernels or across the graph.

- Optimized for one hardware

Primarily optimized for NVIDIA. Want to run at peak performance on other silicon? Maintain a fork separately.

- Text-Only Inference

No support for image, video, or audio models out of the box. Running diffusion or TTS means separate infrastructure.

- 7GB+ Runtime Bloat

PyTorch, CUDA, NCCL, Triton - all pulled in. Slow cold starts, heavy storage overhead, and fragile at fleet scale.

- Fragile Dependency Chains

Frequent breaking changes, version conflicts, and hardware-specific bugs. No single team owns the stack.

Compare deployment options

Self-Hosted | Our Cloud | Your Cloud | |

|---|---|---|---|

Support | Active community and fast responses in Discord, Discourse, Github | Dedicated support, engineering team, standard and custom SLAs/SLOs | Dedicated support, engineering team, standard and custom SLAs/SLOs |

Models | Hundreds of models in our model repo, view top performers | Top performers available for dedicated endpoint, custom model deployment | Top performers available for dedicated endpoint, custom model deployment |

AI Skills | Use our open AI skills to easily write models, or optimize code | Our engineers can help train your team & migrate your workloads | Our engineers can help train your team & migrate your workloads |

Platform access | Deploy MAX and Mojo yourself anywhere you want. Build with open source | Access Modular Platform with a console for deploying, scaling and managing your AI endpoints. | Access Modular Platform with a console for deploying, scaling and managing your AI endpoints. |

Scalability | Scale on your own with the MAX container | Auto-scaling, scale to zero, burst capacity | Auto-scaling, proven at Fortune 500 scale. |

Deployment location | Self-deployed, anywhere | Our cloud | Your cloud or hybrid |

Compute hardware | NVIDIA, AMD, and Apple Silicon & more. Scaling restrictions apply. | NVIDIA & AMD GPUs in our cloud. More hardware coming soon. | NVIDIA & AMD GPUs, Intel, AMD & ARM CPUs - deployed in your cloud. |

Custom kernels | Your engineers write custom kernels for your workloads. | Modular engineers tune kernels for your workloads | Modular engineers write custom kernels for your workloads |

Forward Deployed Engineers | Available with support plan | Included | Included; working in your environment |

Security & Compliance | SOC 2 Type 2 certified | SOC 2 Type 2 certified | SOC 2 Type 2 certified |

Billing & Pricing | Free | Per token (shared) Per minute (dedicated) | Per minute deployed. Use your AWS/GCP/Azure credits and commits |

Enterprise Contract |

Get started with Modular

Schedule a demo of Modular and explore a custom end-to-end deployment built around your models, hardware, and performance goals.

Distributed, large-scale online inference endpoints

Highest-performance to maximize ROI and latency

Deploy in Modular cloud or your cloud

View all features with a custom demo

Book a demo

Talk with our sales lead Jay!

30min demo. Evaluate with your workloads. Ask us anything.

Book a demo for a personalized walkthrough of Modular in your environment. Learn how teams use it to simplify systems and tune performance at scale.

Custom 30 min walkthrough of our platform

Cover specific model or deployment needs

Flexible pricing to fit your specific needs

Book a demo

Talk with our sales lead Jay!

Run any open source model in 5 minutes, then benchmark it. Scale it to millions yourself (for free!).

Install Mojo and get up and running in minutes. A simple install, familiar tooling, and clear docs make it easy to start writing code immediately.